We rarely stop to think about the lightning speed of modern information access. Try picturing a time when answers lived only in libraries – it seems archaic now.

Search tools have become so powerful that they grasp the meaning behind your questions, not just the individual words. This capability is the result of an evolution from keyword to entity-oriented search. While it may seem complex, today we are going to break it down.

Think of a simplified world where websites are replaced by books, and answers are found by a team of 1 million dedicated workers. This analogy will help us understand the systems powering entity search, giving you a newfound appreciation for the speed and accuracy we enjoy today.

Through this exercise, you’ll understand:

Imagine you are responsible for a vast library with thousands of books and access to a million diligent workers. Unlike in a normal library, customers want answers to their questions and are not looking for books to read from front to back.

Customers constantly approach with questions (queries), eager for answers. Your mission is to find the information they need as quickly as possible.

For your library to be successful, you’ll need to return better answers that save customers time than other libraries.

Let’s imagine someone asks, “how fast is the fastest animal”?

If you were a traditional library you’d begin by scanning titles, hoping for a similarity match. The customer would likely receive a stack of books and it would be their job to read through the books and try to find the answer.

This process may take hours. Not to mention, there could be better books that just don’t get returned because their titles are too unrelated.

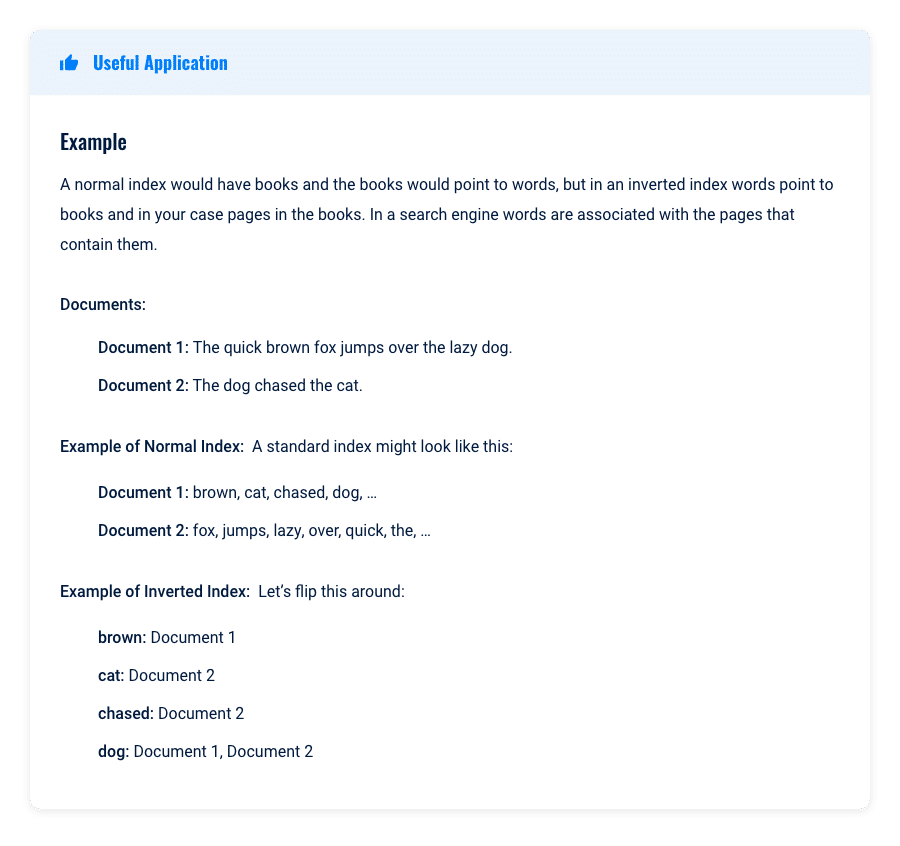

You decide this process is too slow and that this might be a task for your workforce. To accelerate things, you enlist your million-strong workforce to create a comprehensive index.

Instead of focusing on whole books or titles like your original index, they catalog each individual page. Each worker meticulously records every word on a page, along with its location.

The result is what is called an inverted index. The structure looks like this:

Now, when a customer asks, “What is the fastest animal?” your team consults the index, pinpoints “fastest” and “animal,” delivering a list of relevant pages and any page that is in both lists.

This mirrors a traditional search engine – we’re finding keywords, but we do not yet understand the deeper meanings.

Now, the customer is getting a list of hundreds to thousands of pages that may contain the answer. This saves the customer much time as they can jump to relevant pages to hopefully find their answer.

Our inverted indexes were a major leap forward, saving time for both your team and customers.

Word of your improved system spreads, and soon, patrons are lining up at the door.

However, complaints start to arise about irrelevant results and factual errors. Striving for excellence, we recognize the need to address these concerns.

Issues

A word like “apple” leads to an overwhelming response – recipes, science, you name it, are all returned. How can we address this?

This is a tricky problem, and we will need to train your workforce on a few different approaches.

The first approach that might make sense is to train the workforce to grasp context to distinguish (disambiguate) between multiple meanings of a word. For example, if “Apple” is followed by “computer” or “iPhone,” it signifies a different entity than when it’s near “pie” or “tree.”

While using contextual clues is a powerful approach, it’s deceptively difficult. Your workforce needs to learn how to identify the subtle cues that reveal an entity’s true meaning within the surrounding text. This is challenging, requiring a nuanced understanding of language and subject matter expertise that machines may take years to replicate.

To effectively employ context in distinguishing word meanings, we must first construct a robust foundation that empowers our workforce to reorganize the index.

Here are the three steps we will achieve and discuss below:

Your workforce will be trained on the following three steps to help build clues as to which entity is used in the text:

Over time, these observations form the foundation of your guidebook. It could include:

Just like search engines, this system isn’t perfect. The workforce will still encounter ambiguity, but the guidebook dramatically increases their ability to identify the correct entity based on context.

This guidebook can then be used to identify new entities and link existing text to pre-existing entities (called entity-linking).

Building a comprehensive knowledge base from scratch would be a mammoth task. Fortunately, resources like encyclopedias provide a valuable foundation.

Just like Google, we can leverage existing knowledge sources like DBpedia. DBpedia offers well-structured categories and attributes (think of these as specialized tags), giving us a head start in organizing your library’s knowledge.

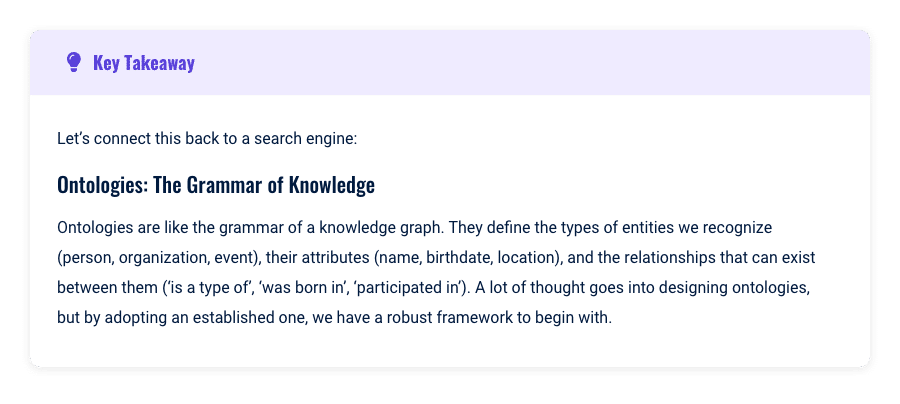

A key decision to make about your knowledge graph is what are the ontologies. We will try to develop ontologies that correspond to the types of queries we see coming into your library.

Next, your tireless workers must transform raw, unstructured information, such as the words on a page into linked knowledge. They’ll re-analyze the library’s books and incoming content, using contextual clues to identify and connect entities to DBpedia’s structure.

Example: Let’s say a page describes a cheetah’s incredible running speed. Your workers might:

Let’s quickly go through an example of the entity linking process:

Each entity and relationship your team identifies becomes a node and edge in your growing knowledge graph – a visual map of connected information!

This structured format allows us to move beyond simple keyword matching and truly understand the meaning behind text. With the knowledge graph, we can augment our index with entities, not just terms.

Unlike plain text, entities have rich attributes associated with them. This deeper understanding will empower us to analyze unstructured text more effectively, interpret user queries more accurately, and provide highly relevant answers.

Now that your workers have built this massive graph of relationships of information, the next question is how can we use this knowledge graph to augment your answering process?

This is where we begin observing the benefits of building this huge graph.

This highlights a major concept often misunderstood in SEO. Google doesn’t just hunt for exact keywords. It can understand that your page addresses a topic even if the precise keyword isn’t present.

While it’s still wise to include variations, thanks to entity understanding, well-written pages can organically rank for related terms you haven’t explicitly targeted.

Imagine a customer asking, “What year did Steve Jobs found Apple?” Your system excels at identifying “Apple” as the company.

However, it might mistakenly prioritize the book “10 Secret Hacks to Growing Your Business,” simply because it briefly mentions “Steve Jobs founding Apple” on page 93.

Since we can’t fact-check every book, we might be concerned that a book about business hacks may not be a reliable source of information on Apple. This could hurt your reputation.

We want customers to find books that spark their interest in further reading about their chosen topic. To solve this, we’ll develop a system that classifies and organizes your books by theme. This way, we can match users’ questions with thematically relevant books.

Our workforce will analyze both the title and table of contents to determine the book’s focus. We’ll also use your knowledge graph to verify that the topics are accurately related to the user’s search, ensuring the results we provide are relevant and helpful.

By carefully classifying books using their table of contents, we can pinpoint the specific categories that best serve particular search topics. This lets us prioritize reliable sources of information, giving a boost to books with a proven track record of expertise.

Linking this back to a search engine, this is the foundation for concepts such as topical authority.

Our new system could stumble when encountering books with overly broad topic coverage in their table of contents. For now, we’ll label these “uncategorized” and avoid boosting them in search results, ensuring we don’t mislead customers.

Our indexing team has built a powerful system, and customers love the improved results.

However, millennials are frustrated when searching for books defining the term “cap” – your system doesn’t recognize this slang usage. It seems Gen Z authors are driving this new language trend, and we need to ensure your system keeps pace with evolving information.

Knowledge is constantly changing. Therefore, we’ve formed a team dedicated to identifying truly new information – scientific discoveries, groundbreaking inventions, or emerging celebrities.

Their mission is twofold:

Our final step is implementing a new paradigm that will help our library as we progress into the future. Our workers are fantastic, but a million salaries are a burden.

Let’s empower authors to streamline the process. We’ll create a structured language, similar to Schema markup, that authors can use to clearly communicate key information.

At the front of every book they can create tables that clearly identify different types of information that are in the book. This will allow our workforce to save time and determine what pages are available without reading them in depth. It will also allow our team to return tables of information to customers instead of pages.

This shift away from plain text (unstructured data) will make your indexing team’s job much easier, freeing them up to tackle the influx of those exciting new Gen-Z books.

This saves us time, so we also reward authors who use it with enhanced content and preference on the stack we send to customers. Now, we’ve completed your entity-oriented library!

We transformed a traditional library into a lightning-fast information retrieval system. Had we done this 30 years ago, we might be billionaires.

This simplified example shows how we evolved from basic title matching to a system that truly understands the user’s intent. We even developed a structured language (think of it like schema markup) to streamline information processing. This lets your team quickly grasp a book’s core content, potentially improving how we rank results.

While we haven’t touched on the complex topic of page scoring (the rank order in which we should send documents back to customers), we’ve achieved something remarkable. We can now pinpoint the most relevant documents, even if they don’t use an exact search term.

Let’s distill your newfound knowledge into actionable SEO takeaways: